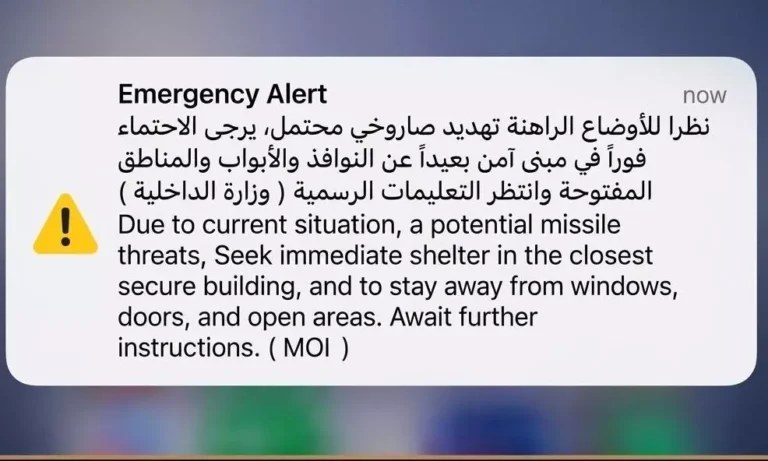

New U.S. laws designed to protect minors are pulling millions of adult Americans into mandatory age-verification gates to access online content, leading to backlash from users and criticism from privacy advocates that a free and open internet is at stake. Roughly half of U.S. states have enacted or are advancing laws requiring platforms, including adult content sites, online gaming services, and social media apps, to block underage users, forcing companies to screen everyone who approaches these digital gates.

“There’s a big spectrum,” said Joe Kaufman, global head of privacy at Jumio, one of the largest digital identity-verification and authentication platforms. He explained that the patchwork of state laws varies in technical demands and compliance expectations. “The regulations are moving in many different directions at once,” he said.

Social media company Discord announced plans in February to roll out mandatory age verification globally. The company said the system would rely on verification methods designed so that facial analysis occurs on a user’s device and submitted data is deleted immediately. The proposal quickly drew backlash from users concerned about having to submit selfies or government IDs to access certain features, which led Discord to delay the launch until the second half of this year.

“Let me be upfront: we knew this rollout was going to be controversial. Any time you introduce something that touches identity and verification, people are going to have strong feelings,” Discord chief technology officer and co-founder Stanislav Vishnevskiy wrote in a Feb. 24 blog post.

Websites offering adult content, gambling, or financial services often rely on full identity verification that requires scanning a government ID and matching it to a live image. But most of the verification systems powering these checkpoints — often run by specialized identity-verification vendors on behalf of websites — rely on artificial intelligence such as facial recognition and age-estimation models that analyze selfies or video to determine in seconds whether someone is old enough to access content. Social media and lower-risk services may use lighter estimation tools designed to confirm age without permanently storing detailed identity records.

Vendors say one challenge is balancing safety against how much friction users will tolerate. “We’re in the business of ensuring that you are absolutely keeping minors safe and out and able to let adults in with as little friction as possible,” said Rivka Gerwitz Little, chief growth officer at identity-verification platform Socure. Excessive data collection, she added, creates friction that users resist.

Still, many users perceive mandatory identity checks as invasive. “Having another way to be forced to provide that information is intrusive to people,” said Heidi Howard Tandy, a partner at Berger Singerman who specializes in intellectual property and internet law. Some users may attempt workarounds — including prepaid cards or alternative credentials — or turn to unauthorized distribution channels. “It’s going to cause a piracy situation,” she added.

Where adult data goes

In many implementations, verification vendors — not the websites themselves — process and retain the identity information, returning only a pass-fail signal to the platform.

Gerwitz Little said Socure does not sell verification data and that in lightweight age-estimation scenarios, where platforms use quick facial analysis or other signals rather than government documentation, the company may store little or no information. But in fuller identity-verification contexts, such as gaming and fraud prevention that require ID scans, certain adult verification records may be retained to document compliance. She said Socure can keep some adult verification data for up to three years while following applicable privacy and purging rules.

Civil liberties advocates warn that concentrating large volumes of identity data among a small number of verification vendors can create attractive targets for hackers and government demands. Earlier this year, Discord disclosed a data breach that exposed ID images belonging to approximately 70,000 users through a compromised third-party service, highlighting the security risks associated with storing sensitive identity information.

In addition, they warn that expanding age-verification systems represent not only a usability challenge but a structural shift in how identity becomes tied to online behavior. Age verification risks tying users’ “most sensitive and immutable data” — names, faces, birthdays, home addresses — to their online activity, according to Molly Buckley, a legislative analyst at the Electronic Frontier Foundation. “Age verification strikes at the foundation of the free and open internet,” she said.

Even when vendors promise to safeguard personal information, users ultimately rely on contractual terms they rarely read or fully understand. “There’s language in their terms-of-use policies that says if the information is requested by law enforcement, they’ll hand it over. They can’t confirm that they will always forever be the only entity who has all of this information. Everyone needs to understand that their baseline information is not something under their control,” Tandy said.

As more platforms route age checks through third-party vendors, that concentration of identity data is also creating new legal exposure for the companies that rely on them. “A company is going to have some of that information passing through their own servers,” Tandy said. “And you can’t offload that kind of liability to a third party.”

Companies can distribute risk through contracts and insurance, she said, but they remain responsible for how identity systems interact with their infrastructure. “What you can do is have really good insurance and require really good insurance from the entities that you’re contracting with,” she said.

Tandy also cautioned that retention promises can be more complex than they appear. “If they say they’re holding it for three years, that’s the minimum amount of time they’re holding it for,” she said. “I wouldn’t feel comfortable trusting a company that says, ‘We delete everything one day after three years.’ That is not going to happen,” she added.

Legal battles are not over

Federal and state regulators argue that age-verification laws are primarily a response to documented harms to minors and insist the rules must operate under strict privacy and security safeguards.

An FTC spokesperson told CNBC that companies must limit how collected information is used. While age-verification technologies can help parents protect children online, the agency said firms are still bound by existing consumer protection rules governing data minimization, retention, and security. The agency pointed to existing rules requiring firms to retain personal information only as long as reasonably necessary and to safeguard its confidentiality and integrity.

CNBC